Microservices changed the way tech architects approached software development, allowing them to break free from the chains of monolithic architecture and compartmentalize development.

This makes software development more efficient, easier to scale, and less error-prone.

However, microservices scaling needs to be a carefully planned process to ensure the right components receive the resources they need when they need them. Here’s a comprehensive guide to the process of microservices scaling.

What is Microservices Scalability?

Microservices scalability refers to the ability of a microservices-based architecture to efficiently handle varying workloads and demands. In contrast to monolithic architectures, microservices provide a modular approach, allowing independent development, testing, and deployment.

Scaling in microservices involves thoughtful adjustments to resources, ensuring that each service can handle its specific load requirements independently.

In microservices, scalability is crucial for achieving flexibility and resilience. The traditional monolithic scaling approach becomes inefficient, as it involves scaling the entire application even when only specific components face increased demand.

Microservices offer a more targeted scaling approach, treating each service as an independent entity. This enables precise scaling based on demand, reducing resource allocation inefficiencies.

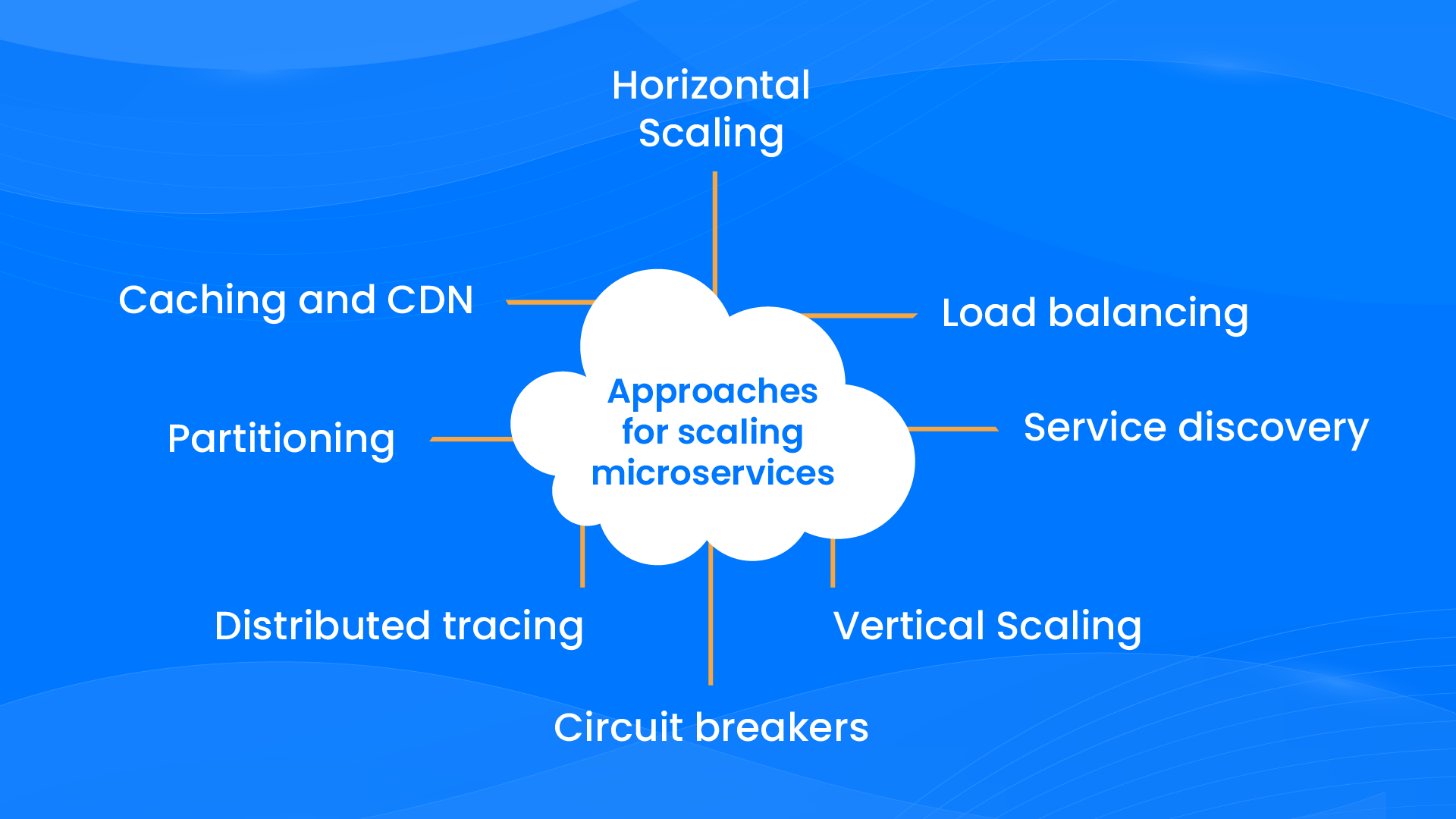

Different Approaches for Scaling Microservices

Eight techniques commonly work together to achieve microservices scalability. Let’s take a quick look at what they are.

1. Horizontal Scaling

Horizontal scaling involves adding more instances of a service to distribute the load efficiently. Load balancing and service discovery mechanisms play a crucial role in achieving this, ensuring even distribution of traffic across multiple instances.

2. Load Balancing

Load balancing ensures the equitable distribution of incoming network traffic among multiple instances, preventing the overloading of any single instance.

3. Service Discovery

Service discovery mechanisms dynamically register and locate services, facilitating efficient communication and load balancing in a dynamic microservices environment.

4. Vertical Scaling

Vertical scaling focuses on increasing the resource capacity of a single instance, making it suitable for services with specific resource requirements or limited horizontal scaling options.

5. Circuit Breakers

Circuit breakers help prevent cascading failures by detecting and isolating faulty services, ensuring the overall system’s resilience.

6. Distributed Tracing

Distributed tracing allows tracking and monitoring of microservices interactions, providing insights into performance bottlenecks and potential improvements.

7. Partitioning

Partitioning involves breaking down tasks into smaller pieces, allowing parallel processing of tasks and enhancing scalability.
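As a rough illustration of partitioning, the sketch below assigns work items to partitions with a stable hash so that each partition can be processed by a separate worker. The function names and the `order-…` keys are hypothetical, and hash-based assignment is only one of several possible partitioning schemes.

```python
import hashlib


def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a partition index using a stable hash (hypothetical helper)."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    # Use the first 8 bytes as an integer so the result is the same on every run.
    return int.from_bytes(digest[:8], "big") % num_partitions


def split_into_partitions(keys, num_partitions: int):
    """Group keys into num_partitions lists that can be processed in parallel."""
    partitions = [[] for _ in range(num_partitions)]
    for key in keys:
        partitions[partition_for(key, num_partitions)].append(key)
    return partitions


if __name__ == "__main__":
    work = [f"order-{i}" for i in range(10)]  # illustrative keys only
    for index, items in enumerate(split_into_partitions(work, 3)):
        print(index, items)
```

Because the hash is stable, the same key always lands in the same partition, which keeps related work together across runs.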

8. Caching and CDN

Caching and Content Delivery Networks (CDNs) improve performance by reducing latency and bringing data closer to end-users. This is particularly beneficial for frequently repeated data queries and for delivering static content.
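To make the caching idea concrete, here is a minimal in-process sketch of a time-to-live (TTL) cache. The `TTLCache` class and its `get_or_load` method are hypothetical names, and a production system would more likely rely on a dedicated cache service or a CDN than on a dictionary inside the process.

```python
import time


class TTLCache:
    """Hypothetical in-memory cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so the cache can be tested without sleeping
        self._store = {}     # key -> (value, expiry time)

    def get_or_load(self, key, loader):
        """Return the cached value, or call loader(key) if it is missing or expired."""
        now = self._clock()
        entry = self._store.get(key)
        if entry is not None and entry[1] > now:
            return entry[0]
        value = loader(key)
        self._store[key] = (value, now + self._ttl)
        return value


if __name__ == "__main__":
    cache = TTLCache(ttl_seconds=60)
    slow_lookup = lambda key: key.upper()  # stand-in for an expensive query
    print(cache.get_or_load("product-42", slow_lookup))  # calls slow_lookup
    print(cache.get_or_load("product-42", slow_lookup))  # served from the cache
```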

How to Analyze the Need for Microservices Scalability

Let’s now dive into how organizations should analyze when they need to scale their microservices.

Identify Bottlenecks with Monitoring Tools

Monitoring tools play a pivotal role in assessing the health and efficiency of microservices. Here’s how businesses can use them to identify bottlenecks.

- Identify Usage Patterns: Understand how users interact with different microservices, detecting patterns in usage and demand.

- Analyze Network Latency: Measure the time it takes for data to travel between microservices, identifying areas where latency may hinder performance.

- Spot Performance Bottlenecks: Pinpoint areas within the microservices architecture where performance is suboptimal, leading to bottlenecks during increased loads.

By leveraging monitoring tools, businesses gain visibility into the overall system performance, helping them identify specific bottlenecks that may impede scalability.

Track Performance Metrics

Tracking performance metrics provides quantitative insights into the efficiency and responsiveness of microservices. Key metrics include:

- Response Time: Measure the time it takes for a microservice to respond to a request. High response times may indicate a need for optimization or additional resources.

- Throughput: Evaluate the volume of transactions or requests processed by a microservice over a given time. Throughput that plateaus or drops while incoming load keeps rising may signal a scalability challenge.

- Error Rates: Monitor the frequency of errors occurring within microservices to identify potential issues affecting user experience.

By analyzing these metrics, businesses can discern which specific microservices may require additional resources or optimization to maintain optimal performance.
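The three metrics above can be computed from a simple log of requests. The sketch below assumes a hypothetical `RequestRecord` shape and uses a nearest-rank 95th percentile as the response-time figure; real monitoring tools usually provide these calculations out of the box.

```python
from dataclasses import dataclass


@dataclass
class RequestRecord:
    """Hypothetical record of one handled request."""
    duration_ms: float
    ok: bool


def summarize(records, window_seconds: float) -> dict:
    """Compute p95 response time, throughput, and error rate for one time window."""
    if window_seconds <= 0:
        raise ValueError("window_seconds must be positive")
    durations = sorted(r.duration_ms for r in records)
    if not durations:
        return {"p95_ms": None, "throughput_rps": 0.0, "error_rate": 0.0}
    # Nearest-rank percentile: ceil(0.95 * n), done in integer arithmetic.
    rank = (95 * len(durations) + 99) // 100
    errors = sum(1 for r in records if not r.ok)
    return {
        "p95_ms": durations[rank - 1],
        "throughput_rps": len(records) / window_seconds,
        "error_rate": errors / len(records),
    }


if __name__ == "__main__":
    sample = [RequestRecord(120.0, True), RequestRecord(480.0, False),
              RequestRecord(95.0, True), RequestRecord(210.0, True)]
    print(summarize(sample, window_seconds=2.0))
```

Comparing these summaries per service and per time window is one way to see which microservice is drifting away from its targets.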

Incorporate User Feedback

User feedback is a qualitative source of information that offers valuable insights into the end-user experience. Here are examples of what user feedback can help you glean.

- Gather Insights into User Experience: Understand user satisfaction, frustrations, and areas of improvement through direct feedback.

- Identify Pain Points: Users often highlight specific functionalities or services that may be experiencing performance issues.

- Inform Optimization and Scaling Strategies: User feedback provides a human-centric perspective, guiding businesses in prioritizing areas for optimization and scaling to enhance the overall user experience.

By incorporating user feedback into the analysis, businesses ensure that scalability efforts align with the actual needs and expectations of their user base, ultimately contributing to a positive and satisfying end-user experience.

How to Scale Microservices

Scaling microservices involves a strategic and multifaceted approach to ensure optimal performance and adaptability to varying workloads. Here are key strategies businesses can employ:

Scale Up Your Bottlenecks First

Identifying and addressing bottlenecks is crucial for effective microservices scaling. Here’s how to do this.

- Identify Bottlenecks: Use monitoring tools to pinpoint specific services experiencing increased demand or performance issues.

- Optimize Resource Allocation: Scale up the specific microservices facing bottlenecks by allocating additional resources, such as computing power or memory, to ensure they can handle the increased load.

This approach ensures that resources are strategically allocated to the services that need them the most, optimizing overall system performance.

Scale Your Dependencies

Consider the dependencies of each microservice to maintain a balanced and efficient system.

- Understand Interdependencies: Recognize how each microservice relies on others for functionality.

- Scale Accordingly: Scale dependent microservices proportionally to maintain a balance in the system. This prevents situations where downstream microservices are overburdened by the extra load passed on from the services that call them.

Balancing the scaling of microservices and their dependencies contributes to a more cohesive and resilient architecture.
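One way to reason about proportional scaling is to propagate the expected request rate through the call graph. The sketch below is only an estimate under stated assumptions: the service names, the calls-per-request figures, and the per-instance capacity are all made up, and the call graph is assumed to be acyclic.

```python
import math


def downstream_load(entry_rps: dict, calls_per_request: dict) -> dict:
    """Estimate requests per second reaching each service.

    entry_rps: external traffic per entry service, e.g. {"checkout": 100}
    calls_per_request: caller -> {callee: calls made per incoming request}
    Assumes the call graph has no cycles.
    """
    load = {}

    def add(service, rps):
        load[service] = load.get(service, 0.0) + rps
        for callee, calls in calls_per_request.get(service, {}).items():
            add(callee, rps * calls)

    for service, rps in entry_rps.items():
        add(service, rps)
    return load


def replicas_needed(load_rps: float, capacity_rps_per_instance: float) -> int:
    """Instances required if each one handles capacity_rps_per_instance."""
    return max(1, math.ceil(load_rps / capacity_rps_per_instance))


if __name__ == "__main__":
    graph = {"checkout": {"payments": 1, "inventory": 2}, "inventory": {"db": 1}}
    for name, rps in downstream_load({"checkout": 100}, graph).items():
        print(name, rps, replicas_needed(rps, capacity_rps_per_instance=60))
```

In this made-up example, doubling traffic to `checkout` would quadruple nothing but would double the load on every service below it, which is why scaling the entry point alone is rarely enough.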

Implement Load Balancing and Service Discovery

Implementing load balancing and service discovery mechanisms is crucial to ensure an even distribution of traffic.

- Load Balancing: Distribute incoming network traffic across multiple microservices instances, preventing overloading of any single instance.

- Service Discovery: Dynamically register and locate microservices, facilitating efficient communication and load balancing in a dynamic microservices environment.

Load balancing and service discovery contribute to a more reliable and responsive microservices architecture.
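The two mechanisms can be sketched together: a registry that tracks which instances of a service are available, and a round-robin picker that spreads requests across them. The `ServiceRegistry` class and the addresses below are hypothetical, and real deployments typically delegate both jobs to the platform or to dedicated tooling.

```python
class ServiceRegistry:
    """Hypothetical in-memory registry with round-robin instance selection."""

    def __init__(self):
        self._instances = {}   # service name -> list of instance addresses
        self._next_index = {}  # service name -> position of the next pick

    def register(self, service: str, address: str) -> None:
        """Add an instance; registering the same address twice is a no-op."""
        instances = self._instances.setdefault(service, [])
        if address not in instances:
            instances.append(address)

    def deregister(self, service: str, address: str) -> None:
        """Remove an instance, for example after a failed health check."""
        instances = self._instances.get(service, [])
        if address in instances:
            instances.remove(address)

    def pick(self, service: str) -> str:
        """Return the next instance in round-robin order."""
        instances = self._instances.get(service)
        if not instances:
            raise LookupError(f"no instances registered for {service!r}")
        index = self._next_index.get(service, 0) % len(instances)
        self._next_index[service] = index + 1
        return instances[index]


if __name__ == "__main__":
    registry = ServiceRegistry()
    registry.register("orders", "10.0.0.1:8080")  # illustrative addresses
    registry.register("orders", "10.0.0.2:8080")
    print([registry.pick("orders") for _ in range(4)])
```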

Leverage Autoscaling and Dynamic Resource Allocation

Leverage autoscaling features and dynamic resource allocation for efficient adaptation to varying workloads.

- Autoscaling: Automatically adjust the number of instances based on resource utilization and predefined rules.

- Dynamic Resource Allocation: Define resource limits and requests, allowing for optimized resource allocation and efficient scaling.

These features, especially when utilized in container orchestration platforms like Kubernetes, enable microservices to adapt seamlessly to changing demands.
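To show what an autoscaling rule can look like, the sketch below follows the ratio-based formula documented for the Kubernetes Horizontal Pod Autoscaler: desired replicas = ceil(current replicas × current metric ÷ target metric). The function name, the default bounds, and the example numbers are assumptions, and the real autoscaler adds details such as tolerances and stabilization windows that are left out here.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Ratio-based scaling rule, clamped to [min_replicas, max_replicas]."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


if __name__ == "__main__":
    # 4 instances averaging 90% CPU against a 60% target.
    print(desired_replicas(4, current_metric=90, target_metric=60))
```

The clamp matters in practice: the lower bound keeps the service available when traffic is idle, and the upper bound caps cost if a metric spikes.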

Monitor Your Application

Continuous monitoring of the application’s performance is essential. Here’s what it involves.

- Real-time Monitoring: Utilize monitoring tools to track performance metrics and identify potential issues in real-time.

- Proactive Adjustments: Make adjustments as needed based on monitoring data to ensure optimal scalability and responsiveness.

Monitoring provides insights into the ongoing performance of microservices and allows for proactive management of potential challenges.

Use a Container Orchestration Platform

Leveraging container orchestration platforms like Kubernetes offers several advantages.

- Efficient Management: Simplify the management of microservices by automating deployment, scaling, and operation tasks.

- Scalability: Easily scale microservices up or down based on demand, optimizing resource usage.

Container orchestration platforms enhance the efficiency and agility of microservices deployment and scaling processes.

Use a Circuit Breaker Pattern

Implementing the Circuit Breaker Pattern is essential for preventing cascading failures. Here’s how it works.

- Fault Detection: Detect faulty microservices, for example by counting consecutive failed calls, and isolate them by rejecting further calls for a cooldown period so they cannot impact the overall system.

- Resilience: Ensure the overall system’s resilience and reliability by proactively managing failures.

The Circuit Breaker Pattern is a crucial element in maintaining a robust and fault-tolerant microservices architecture.
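A minimal sketch of the pattern might look like the following. It assumes the commonly described three states (closed, open, half-open); the class name, the thresholds, and the timeout are hypothetical, and established resilience libraries offer more complete implementations.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when a call is skipped because the circuit is open."""


class CircuitBreaker:
    """Hypothetical breaker: opens after repeated failures, retries after a timeout."""

    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 30.0,
                 clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self._clock = clock      # injectable so tests do not need to sleep
        self._failures = 0
        self._opened_at = None   # None means the circuit is closed

    @property
    def state(self) -> str:
        if self._opened_at is None:
            return "closed"
        if self._clock() - self._opened_at >= self.reset_timeout:
            return "half-open"   # allow a trial call through
        return "open"

    def call(self, func, *args, **kwargs):
        if self.state == "open":
            raise CircuitOpenError("circuit is open; call skipped")
        try:
            result = func(*args, **kwargs)
        except Exception:
            self._failures += 1
            # A failed trial call, or too many failures, (re)opens the circuit.
            if self.state == "half-open" or self._failures >= self.failure_threshold:
                self._opened_at = self._clock()
            raise
        # Any success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

A caller would wrap each remote request, for example `breaker.call(fetch_inventory, item_id)` with a hypothetical `fetch_inventory` function, and treat `CircuitOpenError` as a signal to fall back to a default response instead of waiting on a failing service.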

Achieve Scalability and Performance with CrossAsyst

We at CrossAsyst have a holistic approach to microservices scalability and its role in modern software architecture.

Our expertise allows our clients to harness the full potential of microservices, allowing for agile development, efficient resource utilization, and seamless scalability in response to evolving business needs.

Get in touch with our team today to build scalable custom software solutions using microservices architecture, allowing you to scale up the services that matter the most, without having to worry about downtime.